| With SSIS, most of the time we need to process all the files from the folder. Once file has been processed, we need to move the file to archive folder, so we should know that file has been processed and we have the file in archive folder. Here, We are going to process the files and then will move the file from source folder to archive folder by appending date and time to the filename, so we can use it for future reference. SSIS will do both of these things, Rename a file and move a file, with File System Task with operation “Rename file”. Let’s review how it works: 1. Add Variables:To configure Source folder and Archive Folder by variable

Here, we are adding Current Date time to the filename by expression as mentioned below.

3. Add For each Loop Container and set properties :Now, lets loop thru the folder and process each file from the folder by “For Each loop”. Here, we can setup the folder by Expressions->Directory use Expression @[User::SourceFolder]. We should also specify which type of files we are going to process, like “txt”, “csv” etc..by specifying the same in “Files”. We are fetching the file name with extension so that option needs to be selected as displayed in the following screenshot.  We need to assign each file name to the variable, by Variable Mappings->set variable [User::FileName] and Index as 0  4. Add File System Task:Add “File System Task” inside “For each Loop Container”  5. Set properties for File System Task:This is the place where we need to setup the operation, which will do our job. A. Configure SourceVariable B. Configure DestinationVariable C. Select operation: “Rename File”, which will rename the file and move it to the Archive Folder as we have specified in variable.  6. Run package and check  With SSIS, it is much simple to process multiple files as mentioned above. Reference: Tejas Shah (www.SQLYoga.com) |

Learn SQL and database management at SQLYoga for articles, tutorials, and tips to improve your skills and streamline data operations. Join our community!

September 4, 2014

SQL SERVER: SSIS - Rename and move files from source folder to destination folder

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

September 1, 2014

SQL SERVER: SSIS - Conditional Split Data Flow Transformation Task

| Conditional Split transform use split the source row in easy to multiple groups in data flow and which Destination table populated. Lets review the same by reading a Sample text file and separate out the data in two groups. 1. Create sample text file: This text file is piped delimited and last row in text Total Row count

2. Create sample table: Create sample destination table in Test database 1: CREATE TABLE [dbo].[Employee](2: [EmployeeId] [int] IDENTITY(1,1) NOT NULL,3: [EmployeeNumber] [int] NULL,4: [EmployeeName] [varchar](50) NULL,5: CONSTRAINT [PK_Employee] PRIMARY KEY CLUSTERED6: (7: [EmployeeId] ASC8: )9: )10: GO 3. Add Data Flow Task: Add data flow task in Package for transfer data source text file “Employee.txt” to sql server table “dbo.Employee”  4. Add new Flat File Connection: Right click on Connection Manager and add new Flat File connection  5. Set Flat file source properties: Flat file source properties set Connection manager Name, File Name (Source full filepath), column names in the first data row as a true  6. Add Flat file source: Add “Flat File Source” in “Data flow Task” and set properties Flat file connection manager and retain null values from the source as null values in the data flow as true and click button on preview and see in this file extra row include in text file  7. Add Conditional Split Transformation in Data Flow Task: Add Conditional Split Transformation in data flow task for split row  8. Set Condition Split Properties: Set properties Condition split where EmployeeNumber equal to “Total Row count” then this rows not use and other row use to process.  9. Add new OLE DB Connection: Right click on Connection Managers and add new OLE DB Connection  10. Set OLE DB Connection properties OLE DB Connection properties set Server Name, Connection to a database and click on ok button  11. Add OLE DB Destination: Add “OLE DB Destination” in “Data flow Task” and set properties OLE DB Connection Manager and Name of the table or the view  12. Set Input output selection : Set Input output selection between Condition spit and OLE DB Destination   13. Run package and Check :   In this way we get split the information and use as per the requirement, Reference: Tejas Shah (www.SQLYoga.com) |

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

August 6, 2014

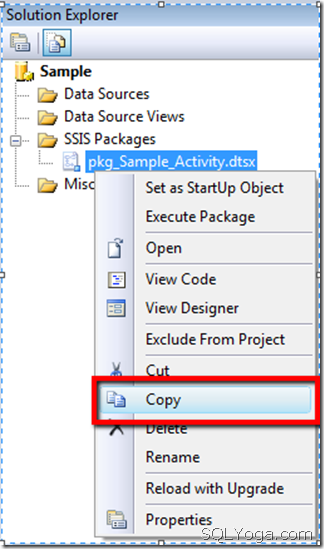

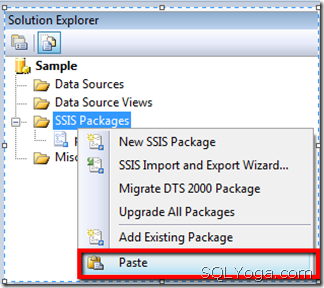

SQL SERVER: Clone SSIS Package

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

June 14, 2014

SQL Yoga : Certificate Expired, Mirroring Stopped

Database Administrators might face this issue while certificate gets expired and database mirroring (Non domain database mirroring) gets disconnected as two servers, Primary and Secondary cannot communicate with each other. The error message can be found in Log as follows:

In this post, We are going to review step by step process to resolve this issue by providing renew parameters with certificate configurations. 1. Create a new certificate with longer endpoint (on Principal): |

1: -- Create a new certificate for the endpoint2: USE master;3: CREATE CERTIFICATE [Principal_Certificate_New]4: WITH SUBJECT = 'Principal Certificate',5: START_DATE='01/01/2014', -- Provide date prior to current date6: EXPIRY_DATE='12/31/2020'; -- Provide this as future date7: GO

2. Take backup of the newly created certificate (on Principal):

1: USE master;2: BACKUP CERTIFICATE [Principal_Certificate_New] TO FILE = N'F:\Backup\Principal_Certificate_New.cer';3: GO

3. Set mirroring to use the newly created certificate (On Principal):

1: ALTER ENDPOINT DBMirrorEndPoint2: FOR DATABASE_MIRRORING (AUTHENTICATION = CERTIFICATE [Principal_Certificate_New])3: GO

4. Delete the old certificate for endpoint (on Principal):

1: USE master;2: DROP CERTIFICATE [Principal_Certificate_Old]3: GO

5. Drop the Old Certificate for Principal Login (on Mirror):

1: USE master;2: DROP CERTIFICATE [Principal_Certificate_Old]3: GO

6. Create a new certificate from the principal backup file (on Mirror):

1: USE master;2: CREATE CERTIFICATE [Principal_Certificate_New] AUTHORIZATION PrincipalServerUser3: FROM FILE = N'F:\Backup\Principal_Certificate_New.cer';4: GO

7. Create a new certificate with longer endpoint (on Mirror):

1: use master;2: CREATE CERTIFICATE Mirror_Certificate_New3: WITH SUBJECT = 'Mirror Certificate New'4: ,EXPIRY_DATE = '12/31/2020' -- Provide this as future date5: GO

8. Take backup of newly created certificate (on Mirror):

1: USE master;2: BACKUP CERTIFICATE [mirror_new_cert] TO FILE = 'F:\Backup\Mirror_Certificate_New.cer';3: GO

9. Set mirroring for newly created certificate (on Mirror):

1: USE master;2: ALTER ENDPOINT DBMirrorEndPoint3: FOR DATABASE_MIRRORING (AUTHENTICATION = CERTIFICATE [Mirror_Certificate_New])4: GO

10. Drop the old certificate for endpoint (On Mirror):

1: USE master;2: DROP CERTIFICATE [Mirror_Certificate_Old]3: GO

11. Drop the old certificate for Mirror login (On Principal):

1: USE master;2: DROP CERTIFICATE [Mirror_Certificate_Old]3: GO

12. Create a new certificate from the mirror backup file (On Principal):

1: USE master;2: CREATE CERTIFICATE [Mirror_Certificate_New] AUTHORIZATION MirrorServerUser3: FROM FILE = 'F:\Backup\Mirror_Certificate_New.cer'4: GO

13. Resume the mirroring session for each database(On Principal and Mirror):

1: USE master;2: ALTER DATABASE [Mirrored_Database_Name] SET PARTNER RESUME3: GO

By following the mentioned steps, we can resolve the certificate issues for Mirroring database and Mirroring will be resumed.

Note : 1. Always prefer to provide the endpoint date as far date, so that issue doesn’t occur very soon. 2. During this process, Primary database is available without any interruption.

Reference: Tejas Shah (www.SQLYoga.com)

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

April 17, 2014

SQL SERVER: SSIS – Merge Join Transformation

| Now, let’s have a look at functionality of Merge Join Transformation task in SSIS. Benefit of using Merge join is, input datasets can be combination of any two datasets from (Excel file, XML file, OLEDB table, Flat file).Output can be result of INNER, LEFT Outer, or FULL Outer Join on both the datasets. Merge Join Transformation has two inputs and one output. It does not support an error output. Use of Merge Join Transformation: Merge Join is a two-step process. First step is to sort both the input datasets(tables) in the same order, and the second step is apply merge join on the common key.Here rows from both the sorted inputs get matched together. To Understand Merge Join Transformation in better way, lets take an example with various configuration parameters in SSIS. 1. Create sample tables: Now we will create input tables named “Department” and “Employee” in Test database. 1: CREATE TABLE Department2: (3: Dept_No INT4: ,Dept_Name VARCHAR(50)5: ,Location VARCHAR(50)6: CONSTRAINT PK_DEPT PRIMARY KEY (Dept_No)7: )8:9: INSERT INTO Department VALUES (10, 'ACCOUNTING', 'Mumbai')10: INSERT INTO Department VALUES (20, 'RESEARCH', 'Delhi')11: INSERT INTO Department VALUES (30, 'SALES', 'Mexico')12: INSERT INTO Department VALUES (40, 'OPERATIONS', 'Sydney')13: GO14:15: CREATE TABLE Employee16: (17: Emp_No INT NOT NULL18: ,Emp_Name VARCHAR(100)19: ,Designation VARCHAR(50)20: ,Manager INT21: ,JoinDate DATE DEFAULT GETDATE()22: ,Salary INT23: ,Dept_No INT24: CONSTRAINT PK_Employee PRIMARY KEY (Emp_No)25: ,CONSTRAINT FK_Dept_No FOREIGN KEY (Dept_No) REFERENCES Department(Dept_No)26: )27:28: INSERT INTO Employee29: (Emp_No,Emp_Name,Designation,Manager,Salary,Dept_No)30: VALUES31: (101, 'Tejas', 'MANAGER', 104, 4000, 20)32: ,(102, 'Michel', 'ANALYST', 101, 1600, 30)33: ,(103, 'Mark', 'DEVELOPER',102, 1250, 30)34: ,(104, 'James', 'DIRECTOR',106, 2975, 10)35: ,(105, 'Raj', 'ANALYST',7566, 3000, 20)36: ,(106, 'TechnoBrains', 'PRESIDENT', NULL, 5000, 40)37: GO 2. Create Data Source Connection: Select and drag “Data Flow Task”, from “Control Flow Items” to designer surface. Then double click it and Create a New OLEDB connection. 3. Select Input Data Sources: Select two different Data Sources which you need to perform merge join on as “OLE_SRC_Employee” and “OLE_SRC_Department”. Create a new “OLEDB Connection” to map it to the source datasets. 4. OLEDB Source Editor: Now double click on “OLEDB Source”, it will open “OLEDB Source Editor” in that provide table configuration parameters and columns mapping from “Columns” tab. 5. Data Sorting: As the Merge Join Transformation accepts the sorted data as input, we will add the sort transformation in the flow. If you know that the data is already sorted then you can set “isSorted” Property as “True” in the “Advanced Editor” for OLEDB Source of the respective dataset. Or else you can use the Sort Transformation task from “Data Flow” Transformation. Now we need to add two Sort components and join the green arrow pipeline from “Employee” to one of the sort transformation and other pipeline from “Department” to the other Sort Transformation.

6. Sort Transformation Editor Source 1: In order to get sorted data, Double click on the “Sort Transformation” that we have connected to “Employee” Dataset to provide the key on which you want to perform sort so that data gets re-ordered in sorted form based on the keys provided. Provide the Sort type as well as sort order if there are multiple keys on which Sort operation will work.

7. Sort Transformation Editor Source 2: Now we have “Employee” table data in sorted form, in the same way need to configure the sort transformation for Source 2 “Department”. For the same double click on the “Sort Transformation” which is connected to “Department” dataset, to provide the Sort key and order in which you want to perform the sort in “Sort Type” property in Editor. Please keep in mind that the Sort type for both the source needs to be of the same type. i.e. any one of ascending or descending order. 8. Merge Join Task Component: Now we will add Merge Join Transformation, so that we can join both the sources together.Drag the pipeline from Employee sort to Merge Join. In “Input Output Selection” popup select Output as “Sort Output” and Input as “Merge Join Left Input”. In Input user has two options as

Using this two options user can specify whether the input needs to be considered as left or right side dataset result. Now you need to drag the pipeline from other “Sort transformation” and connect it to “Merge Join Transformation” as second input. While connecting the second input to the Merge Join, it will not ask for the input type as you have already provided it for the first pipeline, so by default it will select the other type of input to the Merge Join. i.e. Left or Right accordingly.

9. Merge Join Transformation Editor: In order to configure merge join double click on the “Merge Join Transformation” to open the Editor.You need to provide the Join Type to specify which type of join operation you want to perform on the selected dataset. Different Join types are:

Here we will select the “Inner Join” as Join Type as we need to display data from both the datasets. Select “Dept_No” as Join Key as it is the common field on which we can merge two datasets data. 10. Result table creation: We need to create a table to store the output result into Test database as per the script provided. 1: CREATE TABLE [Merge_Join_Output]2: (3: [Emp_No] INT,4: [Emp_Name] VARCHAR(100),5: [Designation] VARCHAR(50),6: [Manager] INT,7: [JoinDate] DATE,8: [Salary] INT,9: [Dept_No] INT,10: [Dept_Name] VARCHAR(50),11: [Work_Location] VARCHAR(50)12: )13: GO 11. Select “OLEDB Destination Editor” to redirect your output to the “Merge_Join_Output” table as shown. In “Mappings” tab map the output columns accordingly.

12. Package Execution: Execute the package and check for the results in the “Merge_Join_Output” table. 13.Result in database After successful execution of the package, we can check the result in “Merge_Join_Output” table. Query: 1: -- OLEDB Table 12: SELECT * FROM Employee3:4: -- OLEDB Table 25: SELECT * FROM Department6:7: -- Output data after Merge Join Operation8: SELECT * FROM Merge_Join_Output9: GO SQL Result:

In this way we get the Merge Join result by combining both the tables data based on common data, such that it becomes easier to navigate information from the single merged table, instead of referring two different tables and link the related data. Reference: Tejas Shah (www.SQLYoga.com) |

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS

18+ years of Hands-on Experience

MICROSOFT CERTIFIED PROFESSIONAL (Microsoft SQL Server)

Proficient in .NET C#

Hands on working experience on MS SQL, DBA, Performance Tuning, Power BI, SSIS, and SSRS